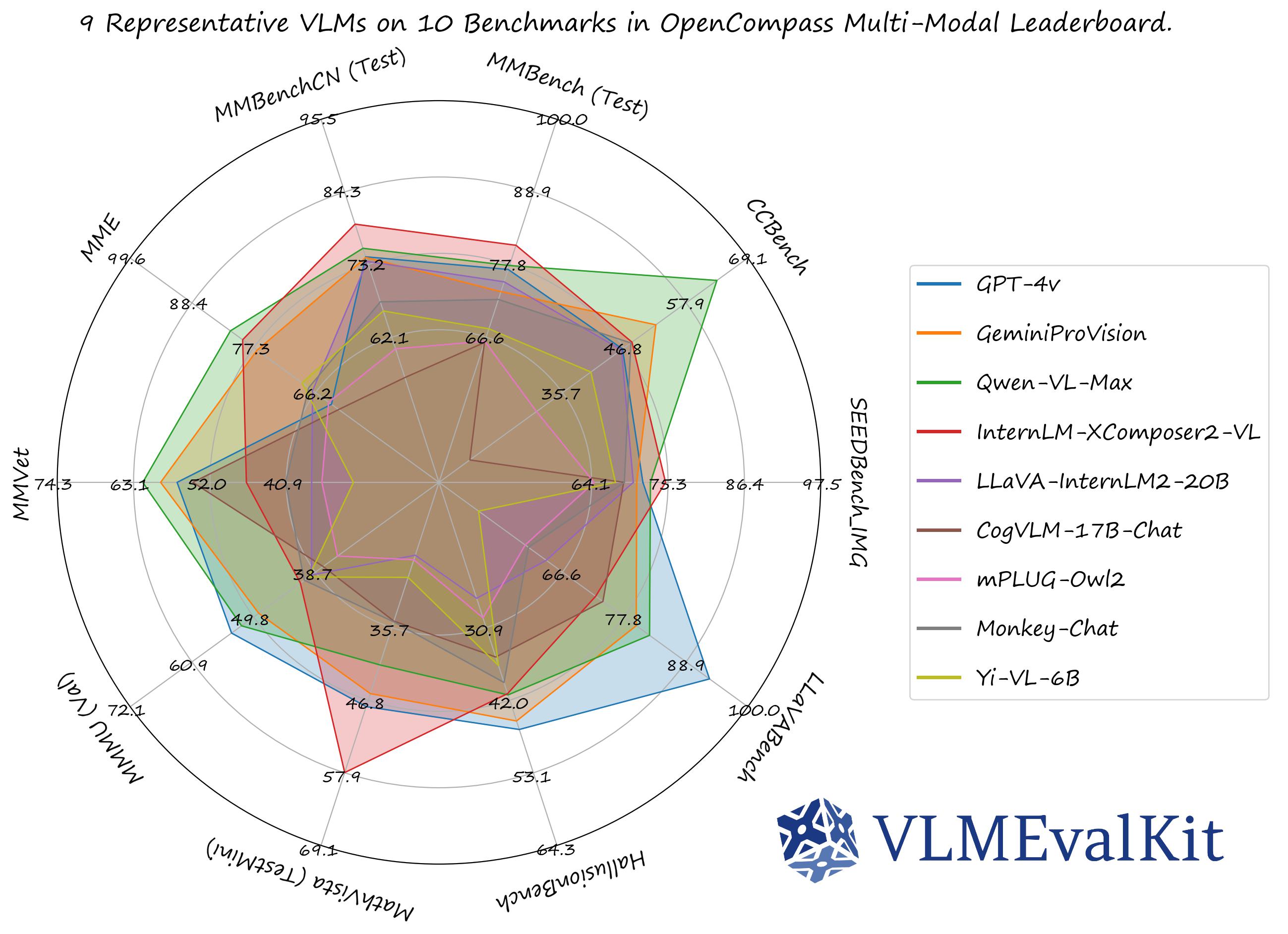

A Toolkit for Evaluating Large Vision-Language Models.

🏆 OC Leaderboard • 🏗️ Quickstart • 📊 Datasets & Models • 🛠️ Development

🤗 HF Leaderboard • 🤗 Evaluation Records • 🤗 HF Video Leaderboard

🔊 Discord • 📝 Report • 🎯Goal • 🖊️Citation

VLMEvalKit (the python package name is vlmeval) is an open-source evaluation toolkit of large vision-language models (LVLMs). It enables one-command evaluation of LVLMs on various benchmarks, without the heavy workload of data preparation under multiple repositories. In VLMEvalKit, we adopt generation-based evaluation for all LVLMs, and provide the evaluation results obtained with both exact matching and LLM-based answer extraction.
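To make the two answer-extraction modes concrete, here is a minimal sketch for multiple-choice questions. It is not VLMEvalKit's actual implementation: the function names and the `llm_extractor` callback are hypothetical. The idea is to try a cheap exact match on the generated text first, and to fall back to an LLM-based extractor only when the match is ambiguous.

```python
import re
from typing import Callable, Optional


def extract_choice_exact(response: str, choices: str = "ABCD") -> Optional[str]:
    """Return the option letter if exactly one distinct option letter
    appears as a standalone uppercase token; otherwise return None."""
    tokens = re.findall(r"\b([A-Z])\b", response)
    found = {t for t in tokens if t in choices}
    return found.pop() if len(found) == 1 else None


def extract_choice(
    response: str,
    choices: str = "ABCD",
    llm_extractor: Optional[Callable[[str], Optional[str]]] = None,
) -> Optional[str]:
    """Try exact matching first; fall back to the (hypothetical) LLM extractor."""
    answer = extract_choice_exact(response, choices)
    if answer is None and llm_extractor is not None:
        answer = llm_extractor(response)
    return answer


print(extract_choice("The answer is B."))
print(extract_choice("Either A or B could be right."))
```

This exact matcher is deliberately naive: a response that begins with the article "A" would be read as option A, which is one reason an LLM-based fallback can be useful for free-form generations.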

We have presented a comprehensive survey on the evaluation of large multi-modality models, jointly with MME Team and LMMs-Lab 🔥🔥🔥

- [2025-02-20] Supported Models: InternVL2.5 series, QwenVL2.5 series, QVQ-72B, Doubao-VL, Janus-Pro-7B, MiniCPM-o-2.6, InternVL2-MPO, LLaVA-CoT, Hunyuan-Standard-Vision, Ovis2, Valley, SAIL-VL, Ross, Long-VITA, EMU3, SmolVLM. Supported Benchmarks: MMMU-Pro, WeMath, 3DSRBench, LogicVista, VL-RewardBench, CC-OCR, CG-Bench, CMMMU, WorldSense. Please refer to VLMEvalKit Features for more details. Thanks to all contributors 🔥🔥🔥

- [2024-12-11] Supported NaturalBench, a vision-centric VQA benchmark (NeurIPS'24) that challenges vision-language models with simple questions about natural imagery.

- [2024-12-02] Supported VisOnlyQA, a benchmark for evaluating the visual perception capabilities 🔥🔥🔥

- [2024-11-26] Supported Ovis1.6-Gemma2-27B, thanks to runninglsy 🔥🔥🔥

- [2024-11-25] Created a new flag `VLMEVALKIT_USE_MODELSCOPE`. By setting this environment variable, you can download the supported video benchmarks from ModelScope 🔥🔥🔥

- [2024-11-25] Supported VizWiz benchmark 🔥🔥🔥

- [2024-11-22] Supported the inference of MMGenBench, thanks to lerogo 🔥🔥🔥

- [2024-11-22] Supported Dynamath, a multimodal math benchmark comprising 501 seed problems and 10 variants generated from random seeds. The benchmark can be used to measure the robustness of MLLMs in multimodal math solving 🔥🔥🔥

- [2024-11-21] Integrated a new config system to enable more flexible evaluation settings. Check the Document or run `python run.py --help` for more details 🔥🔥🔥

- [2024-11-21] Supported QSpatial, a multimodal benchmark for Quantitative Spatial Reasoning (e.g., determining size or distance), thanks to andrewliao11 for providing the official support 🔥🔥🔥

- [2024-11-21] Supported MM-Math, a new multimodal math benchmark comprising ~6K middle school multimodal reasoning math problems. GPT-4o-20240806 achieves 22.5% accuracy on this benchmark 🔥🔥🔥

See [QuickStart | 快速开始] for a quick start guide.

The performance numbers on our official multi-modal leaderboards can be downloaded from here!

OpenVLM Leaderboard: Download All DETAILED Results.

Check Supported Benchmarks Tab in VLMEvalKit Features to view all supported image & video benchmarks (70+).

Check Supported LMMs Tab in VLMEvalKit Features to view all supported LMMs, including commercial APIs, open-source models, and more (200+).

Transformers Version Recommendation:

Some VLMs may not run under certain transformers versions. We recommend the following settings to evaluate each VLM:

- Please use `transformers==4.33.0` for: Qwen series, Monkey series, InternLM-XComposer Series, mPLUG-Owl2, OpenFlamingo v2, IDEFICS series, VisualGLM, MMAlaya, ShareCaptioner, MiniGPT-4 series, InstructBLIP series, PandaGPT, VXVERSE.

- Please use `transformers==4.36.2` for: Moondream1.

- Please use `transformers==4.37.0` for: LLaVA series, ShareGPT4V series, TransCore-M, LLaVA (XTuner), CogVLM Series, EMU2 Series, Yi-VL Series, MiniCPM-[V1/V2], OmniLMM-12B, DeepSeek-VL series, InternVL series, Cambrian Series, VILA Series, Llama-3-MixSenseV1_1, Parrot-7B, PLLaVA Series.

- Please use `transformers==4.40.0` for: IDEFICS2, Bunny-Llama3, MiniCPM-Llama3-V2.5, 360VL-70B, Phi-3-Vision, WeMM.

- Please use `transformers==4.42.0` for: AKI.

- Please use `transformers==4.44.0` for: Moondream2, H2OVL series.

- Please use `transformers==4.45.0` for: Aria.

- Please use `transformers==latest` for: LLaVA-Next series, PaliGemma-3B, Chameleon series, Video-LLaVA-7B-HF, Ovis series, Mantis series, MiniCPM-V2.6, OmChat-v2.0-13B-sinlge-beta, Idefics-3, GLM-4v-9B, VideoChat2-HD, RBDash_72b, Llama-3.2 series, Kosmos series.
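The recommendations above can be checked before launching an evaluation. The sketch below is illustrative and not part of VLMEvalKit: `RECOMMENDED_TRANSFORMERS` and `check_transformers` are hypothetical names, and the table holds only a small subset of the families listed above (one per pinned version).

```python
from importlib import metadata
from typing import Optional

# Hypothetical lookup table: a subset of the recommendations listed above.
RECOMMENDED_TRANSFORMERS = {
    "Qwen series": "4.33.0",
    "Moondream1": "4.36.2",
    "LLaVA series": "4.37.0",
    "IDEFICS2": "4.40.0",
    "AKI": "4.42.0",
    "Moondream2": "4.44.0",
    "Aria": "4.45.0",
}


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_transformers(family: str, installed: Optional[str] = None) -> str:
    """Compare the installed transformers version with the pinned recommendation."""
    wanted = RECOMMENDED_TRANSFORMERS.get(family)
    if wanted is None:
        return f"No pinned recommendation recorded for {family!r}."
    if installed is None:
        installed = installed_version("transformers")
    if installed == wanted:
        return f"OK: transformers=={installed} matches the recommendation for {family}."
    return (
        f"Mismatch: {family} recommends transformers=={wanted}, found {installed}; "
        f"try `pip install transformers=={wanted}`."
    )


print(check_transformers("Aria"))
```

Passing `installed` explicitly, as in `check_transformers("AKI", installed="4.33.0")`, makes the helper usable without transformers being present, for example in a pre-flight script.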

Torchvision Version Recommendation:

Some VLMs may not run under certain torchvision versions. We recommend the following settings to evaluate each VLM:

- Please use `torchvision>=0.16` for: Moondream series and Aria.
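A minimum-version constraint such as `torchvision>=0.16` can be verified with a small comparison helper. This is a simplified sketch, assuming that comparing only the leading numeric release segments is good enough; it ignores pre-release and local version tags, and the helper names are hypothetical.

```python
import re
from typing import Tuple


def release_tuple(version: str) -> Tuple[int, ...]:
    """Parse the leading numeric segments, e.g. '0.16.2+cu121' -> (0, 16, 2)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"Cannot parse version string: {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def meets_minimum(version: str, minimum: str) -> bool:
    """Return True if `version` is at least `minimum` (release segments only)."""
    have, need = release_tuple(version), release_tuple(minimum)
    # Pad the shorter tuple with zeros so that '0.16' compares equal to '0.16.0'.
    width = max(len(have), len(need))
    have += (0,) * (width - len(have))
    need += (0,) * (width - len(need))
    return have >= need


print(meets_minimum("0.16.2+cu121", "0.16"))
print(meets_minimum("0.15.2", "0.16"))
```

For stricter handling of pre-releases, a dedicated version-parsing library would be the safer choice.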

Flash-attn Version Recommendation:

Some VLMs may not run under certain flash-attention versions. We recommend the following settings to evaluate each VLM:

- Please use `pip install flash-attn --no-build-isolation` for: Aria.

    # Demo
    from vlmeval.config import supported_VLM
    model = supported_VLM['idefics_9b_instruct']()
    # Forward Single Image
    ret = model.generate(['assets/apple.jpg', 'What is in this image?'])
    print(ret)  # The image features a red apple with a leaf on it.
    # Forward Multiple Images
    ret = model.generate(['assets/apple.jpg', 'assets/apple.jpg', 'How many apples are there in the provided images? '])
    print(ret)  # There are two apples in the provided images.

To develop custom benchmarks or VLMs, or to contribute other code to VLMEvalKit, please refer to [Development_Guide | 开发指南].
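In the demo, `model.generate` receives a flat list in which the image paths come first and the text prompt comes last. A small helper can make that convention explicit. `build_message` is a hypothetical name used for illustration, not a VLMEvalKit function.

```python
from typing import List, Sequence


def build_message(image_paths: Sequence[str], prompt: str) -> List[str]:
    """Build a flat message list: image paths first, then the text prompt,
    mirroring the list layout used in the demo calls."""
    if not prompt.strip():
        raise ValueError("The text prompt must not be empty.")
    return [*image_paths, prompt]


message = build_message(
    ["assets/apple.jpg", "assets/apple.jpg"],
    "How many apples are there in the provided images?",
)
print(message)
```

The resulting list has the same shape as the multi-image call in the demo, so it could be passed as `model.generate(message)`.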

Call for contributions

To promote the contribution from the community and share the corresponding credit (in the next report update):

- All Contributions will be acknowledged in the report.

- Contributors with 3 or more major contributions (implementing an MLLM, a benchmark, or a major feature) can join the author list of the VLMEvalKit Technical Report on arXiv. Eligible contributors can create an issue or DM kennyutc in the VLMEvalKit Discord channel.

Here is a contributor list we curated based on the records.